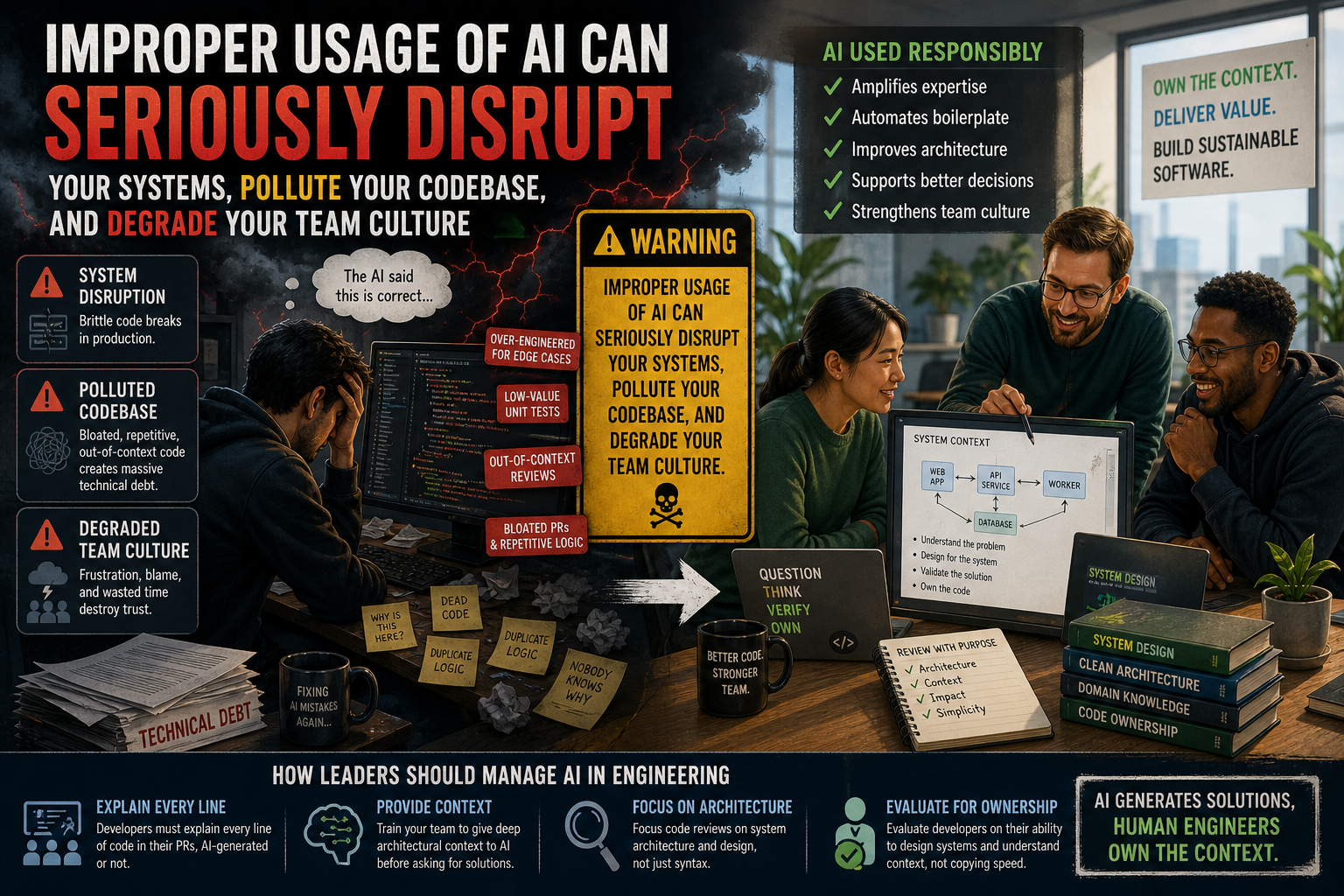

All the world is adopting AI tools like ChatGPT, Gemini, and Claude expecting a massive boost in developer productivity. The reality is much more complicated. AI is highly effective, but it exponentially increases the underlying skills of the person using it. If an engineer is experienced and understands the system, AI makes them excellent. But if a developer lacks foundational knowledge, improper usage of AI risks making them significantly worse. Instead of writing better software, teams are generating massive amounts of technical debt at unprecedented speeds.

The Multiplier Effect on Software Engineers

AI does not inherently elevate people, code, or infrastructure. It simply amplifies what is already there. Good engineers use AI to automate boilerplate, brainstorm architecture, and scaffold complex ideas. Inexperienced engineers often use conversational AI as a shortcut to write logic they do not actually understand or actually need.

An AI assistant cannot replace the architectural context that lives inside an experienced developer. This creates a dangerous scenario. An inexperienced or distracted developer pastes a prompt into a chatbot and produces code that looks syntactically correct but is fundamentally flawed for the specific use case. The AI reveals their technical debt and lack of system knowledge rather than hiding it.

The Illusion of Competence

One of the biggest risks of AI generation is overconfidence. Developers are becoming overconfident because the AI tells them they are right. They trust the generated output implicitly and stop questioning the logic.

This false confidence turns small architectural mistakes into lengthy, frustrating debates that waste hours of engineering time. When a senior engineer points out a flaw in a pull request, the less experienced developer often defends the AI-generated code. We are spending entirely too much time discussing topics or answering messages that simply do not make sense in the context of the actual product.

Common Mistakes Driven by Improper Usage of AI

When developers rely on AI without deep system knowledge, specific patterns of bad code emerge quickly. You will start seeing these issues cluttering your repositories.

- Over-engineering for phantom edge cases: AI chatbots often suggest error handling or abstractions for scenarios that will never occur in your specific execution path.

- Low-value unit tests: AI tools easily generate hundreds of tests that verify trivial logic but fail to catch meaningful regressions. This creates a maintenance burden where teams spend more time updating tests than writing features.

- Out-of-context code reviews: Pasting snippets into AI tools for review frequently flags issues that ignore the broader application state. It cannot know how a specific script is executed and whether it is even reachable in the current architecture.

- Bloated pull requests and repetitive logic: Developers submit massive blocks of AI-generated code that often include repetitive logic. Because AI models usually take the easiest path to solve a local problem, they frequently ignore existing abstractions.

The actionable takeaway is to shift the focus from speed to ownership by treating AI-generated code as a proposal that requires rigorous validation.

How Leaders Should Manage AI in Engineering

We might be tempted to restrict AI access to only our most senior developers. While restricting access completely is extreme, engineering managers must establish strict boundaries. We have to change how we train teams to use these tools.

- Require developers to explain every line of code in their pull requests, regardless of whether they wrote it or a model generated it.

- Train your team to provide deep architectural context to the AI before asking for solutions.

- Focus your code reviews on system architecture rather than just syntax.

You must evaluate your developers on their ability to design systems and understand context, not just their ability to copy and paste code quickly.

Frequently Asked Questions

Do general AI tools increase technical debt?

Yes, if used improperly. AI chatbots can generate verbose, context-blind code. If developers do not carefully review this output and adapt it to their specific system, it rapidly accumulates as technical debt.

Should junior developers use AI tools?

Junior or inexperienced developers can use AI, but they need strict supervision. It is important to note that years of experience do not always equate to being an experienced developer in a specific context. Anyone lacking the necessary system knowledge should use AI to learn syntax rather than relying on it to design complex logic from scratch.

How do you fix bad AI generation?

You fix bad AI output by improving the human review process. Enforce strict pairing sessions, mandate architectural design documents, and never merge code the author cannot fully explain.

The Missing Warning Label

Perhaps it is time we treated AI tools like high-risk substances. We might eventually need a warning label similar to those found on cigarette boxes. It would state clearly: Improper usage of AI can seriously disrupt your systems, pollute your codebase, and degrade your team culture.

AI tools are reshaping how we build software. However, they are not a silver bullet for developer productivity. They are a powerful multiplier that exposes both our engineering strengths and our technical flaws. To build sustainable software, we must remember that AI generates solutions, but human engineers must own the context. Keep refining your review processes and prioritize deep technical understanding over raw speed.